Radial basis functions (RBFs) in machine learning are a powerful tool for handling non-linear data patterns through neural networks that use distance-based activations. This approach helps models approximate complex functions and make accurate predictions in tasks like classification and regression.

This blog explains what a radial basis function is, its formula, network structure, training steps, a solved example, and applications of radial basis function networks. You will gain a clear understanding of how these networks work, their strengths, and their uses, building from the basics to advanced applications.


What is Radial Basis Function?

A radial basis function depends only on the distance from a fixed centre point, making it radially symmetric. In machine learning, it acts as an activation function in neural networks to transform inputs based on proximity to centres.

Imagine dropping a pebble in water; the ripples spread out evenly from the centre. Similarly, a radial basis function peaks at its centre and fades with distance, helping networks focus on nearby data points.

- It maps inputs to higher dimensions for better separation of classes.

- Common in approximation and interpolation tasks.

- Introduced prominently in the late 1980s by Broomhead and Lowe for neural networks.

As David Broomhead noted in their foundational work, “RBF networks offer a way to handle non-linear relationships efficiently.”

Radial Basis Function Formula

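The general radial basis function formula takes the form $\phi(\mathbf{x}) = \phi(\|\mathbf{x} - \mathbf{c}\|)$, where $\|\mathbf{x} - \mathbf{c}\|$ is the Euclidean distance between input $\mathbf{x}$ and centre $\mathbf{c}$. This measures how far an input lies from the neuron’s centre.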

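The most used variant is the Gaussian radial basis function: $\phi(r) = \exp\left(-\frac{r^2}{2\sigma^2}\right)$, with $r = \|\mathbf{x} - \mathbf{c}\|$ the distance to the centre and $\sigma$ controlling the spread or width. Here, the output drops exponentially with the squared distance, creating a bell-shaped curve.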

Wikipedia defines it precisely: “A radial basis function is a real-valued function whose value depends only on the distance from the origin.” This simplicity aids computations in high dimensions. Why does spread matter? A poorly chosen $\sigma$ can cause overfitting or underfitting: if it is too narrow, each neuron responds only to inputs very close to its centre, and if it is too broad, every neuron responds to almost every input.
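
To make the effect of the spread concrete, here is a minimal sketch of a Gaussian RBF in plain Python. It assumes the $\exp(-r^2 / (2\sigma^2))$ form of the Gaussian; the function name `gaussian_rbf` and the sample points are illustrative choices, not taken from any library.

```python
import math

def gaussian_rbf(x, centre, sigma):
    """Gaussian RBF: exp(-||x - c||^2 / (2 * sigma^2))."""
    # Squared Euclidean distance between the input and the centre
    sq_dist = sum((xi - ci) ** 2 for xi, ci in zip(x, centre))
    return math.exp(-sq_dist / (2.0 * sigma ** 2))

centre = [0.0, 0.0]
point = [1.0, 1.0]  # illustrative input at distance sqrt(2) from the centre

# The same input activates the neuron more strongly as sigma grows
for sigma in (0.5, 1.0, 2.0):
    print(f"sigma={sigma}: activation={gaussian_rbf(point, centre, sigma):.4f}")
```

The activation is exactly 1 at the centre and increases with $\sigma$ for any other fixed input, which is why a narrow spread makes each neuron very local and a wide spread makes neurons overlap heavily.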

Architecture of Radial Basis Function Networks

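Radial basis function networks feature three layers: input, hidden, and output. They differ from multi-layer perceptrons by using RBFs only in the hidden layer. The input layer passes features directly. The hidden layer applies RBFs: each neuron has a centre and computes its activation from the input's distance to that centre. The output layer linearly combines these activations via weights $w_j$: $y(\mathbf{x}) = \sum_{j=1}^{M} w_j \phi_j(\mathbf{x})$, where $M$ is the number of hidden neurons and $\phi_j$ is the activation of neuron $j$.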

- Input layer: No computation, just feature forwarding.

- Hidden layer: RBF neurons (Gaussian common) map to feature space.

- Output layer: Linear weighted sum for prediction.
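
The three layers listed above can be sketched as a single forward pass. This is a minimal illustration for a single-output network with Gaussian hidden neurons; the function name `rbf_forward` and the centres, spreads, and weights are made-up values for demonstration only.

```python
import math

def rbf_forward(x, centres, sigmas, weights):
    """Forward pass of a single-output RBF network with Gaussian neurons."""
    # Input layer: x is forwarded unchanged.
    # Hidden layer: one Gaussian activation per centre.
    hidden = []
    for c, s in zip(centres, sigmas):
        sq_dist = sum((xi - ci) ** 2 for xi, ci in zip(x, c))
        hidden.append(math.exp(-sq_dist / (2.0 * s ** 2)))
    # Output layer: linear weighted sum of the hidden activations.
    return sum(w * h for w, h in zip(weights, hidden))

# Hypothetical network with two hidden neurons
centres = [[0.0, 0.0], [1.0, 1.0]]
sigmas = [1.0, 1.0]
weights = [0.5, -0.25]

y = rbf_forward([0.0, 0.0], centres, sigmas, weights)
print(y)
```

Because the only trainable part of the output layer is linear in the weights, fitting them reduces to a linear least-squares problem once the centres and spreads are fixed.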

This structure enables universal approximation for continuous functions on compact sets. John Moody and Christian Darken stated, “Networks of locally tuned processing units learn rapidly,” highlighting faster training over backpropagation.

Training Radial Basis Function Networks

Training splits into unsupervised centre selection and supervised weight fitting, avoiding full backpropagation.

First, select centres using k-means clustering on data or random sampling from inputs. This places RBFs at data representatives. Then, set spreads as average centre distances or via cross-validation.

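Finally, solve for the output weights with least squares: form the matrix $\Phi$ with entries $\Phi_{ij} = \phi_j(\mathbf{x}_i)$, then compute $\mathbf{w} = \Phi^{+}\mathbf{y}$ using the pseudoinverse $\Phi^{+}$, minimising the squared error.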
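
The training steps above can be sketched with NumPy as follows. This is one possible reading rather than a reference implementation: it assumes centres are picked by random sampling from the inputs (k-means would be the alternative), a single shared spread equal to the average distance between centres, and the $\exp(-r^2 / (2\sigma^2))$ Gaussian. The helper names `design_matrix`, `fit_rbf`, and `predict` are hypothetical.

```python
import numpy as np

def design_matrix(X, centres, sigma):
    """Phi[i, j] = Gaussian activation of centre j for sample i."""
    # Pairwise squared distances, shape (n_samples, n_centres)
    sq_dists = ((X[:, None, :] - centres[None, :, :]) ** 2).sum(axis=2)
    return np.exp(-sq_dists / (2.0 * sigma ** 2))

def fit_rbf(X, y, n_centres, seed=0):
    rng = np.random.default_rng(seed)
    # Step 1: centres by random sampling from the inputs
    idx = rng.choice(len(X), size=n_centres, replace=False)
    centres = X[idx]
    # Step 2: shared spread = average distance between distinct centres
    diffs = centres[:, None, :] - centres[None, :, :]
    dists = np.sqrt((diffs ** 2).sum(axis=2))
    sigma = dists[np.triu_indices(n_centres, k=1)].mean()
    # Step 3: output weights by least squares via the pseudoinverse
    Phi = design_matrix(X, centres, sigma)
    weights = np.linalg.pinv(Phi) @ y
    return centres, sigma, weights

def predict(X, centres, sigma, weights):
    return design_matrix(X, centres, sigma) @ weights

# Illustrative data: samples of sin(x) on [0, pi]
X = np.linspace(0.0, np.pi, 40).reshape(-1, 1)
y = np.sin(X).ravel()

centres, sigma, weights = fit_rbf(X, y, n_centres=8)
rmse = np.sqrt(np.mean((predict(X, centres, sigma, weights) - y) ** 2))
print(f"sigma={sigma:.3f}, training RMSE={rmse:.4f}")
```

No gradient descent appears anywhere: the only fitting step is one pseudoinverse, which is where the speed advantage over backpropagation comes from.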

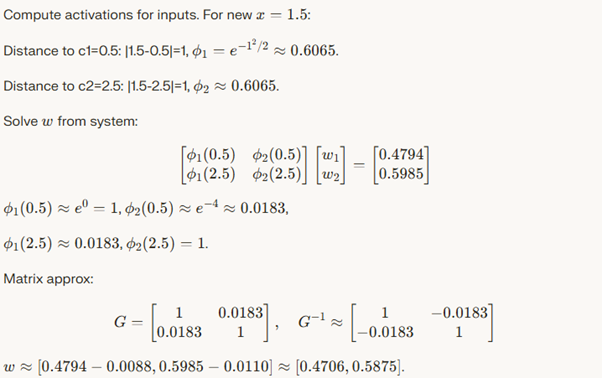

Radial Basis Function Solved Example

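Consider approximating $f(x) = \sin(x)$ on $[0, \pi]$ with two data points: (0.5, 0.4794) and (2.5, 0.5985). Use two Gaussian RBFs with centres at these points, $c_1 = 0.5$ and $c_2 = 2.5$, and a shared spread $\sigma$.

Solving the $2 \times 2$ interpolation system for the weights $w_1$ and $w_2$, so that the network reproduces both data points, gives the prediction at $x = 1.5$ as $\hat{y}(1.5) = w_1 \phi_1(1.5) + w_2 \phi_2(1.5)$, where $\phi_j$ is the Gaussian centred at $c_j$. With only two samples, this falls well short of $\sin(1.5) \approx 0.997$, and adjusting $\sigma$ improves the fit only slightly. The example mainly shows that the network interpolates the training points exactly; a good fit between them needs more data points and centres.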
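
The example can be worked through numerically with a short script. The spread is not given in the text, so $\sigma = 1$ below is purely an assumed value for illustration, as is the $\exp(-r^2 / (2\sigma^2))$ form of the Gaussian.

```python
import math

def gaussian(x, c, sigma):
    return math.exp(-((x - c) ** 2) / (2.0 * sigma ** 2))

# Data points from the example: samples of sin(x)
x1, y1 = 0.5, 0.4794
x2, y2 = 2.5, 0.5985
sigma = 1.0  # ASSUMED value; the text does not state the spread

# Interpolation matrix is [[1, a], [a, 1]] because each centre sits on a data point
a = gaussian(x1, x2, sigma)

# Solve [[1, a], [a, 1]] @ [w1, w2] = [y1, y2] with Cramer's rule
det = 1.0 - a * a
w1 = (y1 - a * y2) / det
w2 = (y2 - a * y1) / det

def predict(x):
    return w1 * gaussian(x, x1, sigma) + w2 * gaussian(x, x2, sigma)

print(f"w1={w1:.4f}, w2={w2:.4f}")
print(f"prediction at 1.5: {predict(1.5):.4f}, sin(1.5): {math.sin(1.5):.4f}")
```

Under this assumed spread, the network reproduces both training points exactly but predicts roughly 0.58 at $x = 1.5$, far from $\sin(1.5)$. Two samples with nearly equal targets carry no information about the peak between them.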

Applications of Radial Basis Function Network

Radial basis function networks are applied to prediction, recognition, and control tasks because they handle non-linear relationships well.

- Time-series forecasting: Stock prices, weather – captures trends.

- Classification: MNIST digits, fraud detection via outliers.

- Function approximation: Engineering simulations.

- Image processing: Denoising, reconstruction.

- Control systems: Robotic arms.

In finance, RBF networks detect anomalies in transactions. “RBF networks excel in pattern recognition,” per GeeksforGeeks. The RBF is also used as a kernel in SVMs to form non-linear decision boundaries.

Advantages And Disadvantages of Radial Basis Function in Machine Learning

Advantages:

- Fast training, no backprop needed.

- Good for non-linear data, universal approximators.

- Smooth outputs for interpolation.

Disadvantages:

- Sensitive to centre/spread choice.

- Scales poorly with data size (many neurons).

- High memory for large hidden layers.

On A Final Note…

Radial basis functions in machine learning power efficient networks for non-linear tasks. With well-chosen centres and spreads and properly fitted weights, these networks deliver robust predictions, bridging theory and practice.